Operating Systems – Memory Management: Fixed Partitioning, Variable Partitioning

In a multi-programming system, in order to share the processor, a number of processes must be kept in memory. Memory management is achieved through memory management algorithms. Each memory management algorithm requires its own hardware support.

In this topic, we shall look at the partitioning, paging and segmentation methods.

To be able to load programs anywhere in memory, the compiler must generate relocatable object code. We must also make sure that a program in memory addresses only its own area, and no other program’s area. Therefore, some protection mechanism is also needed.
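One common way to combine relocation with protection is a pair of base and limit registers. The text does not name a specific mechanism, so the following is only a minimal sketch under that assumption; `translate`, `ProtectionFault`, and the example addresses are hypothetical names and values chosen for illustration.

```python
class ProtectionFault(Exception):
    """Raised when a process addresses memory outside its own area."""


def translate(logical_address: int, base: int, limit: int) -> int:
    """Map a logical address to a physical one, enforcing the process's bounds.

    base  -- physical address where the process's area starts (assumed register)
    limit -- size of the process's area in bytes (assumed register)
    """
    if not 0 <= logical_address < limit:
        raise ProtectionFault(f"address {logical_address} outside 0..{limit - 1}")
    return base + logical_address


# Hypothetical process loaded at physical address 8192 with a 4096-byte area.
print(translate(100, base=8192, limit=4096))  # 8292
```

Under this scheme, moving a process to another place in memory only requires changing its `base` value, since the program itself uses logical addresses starting at 0.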

1 Fixed Partitioning

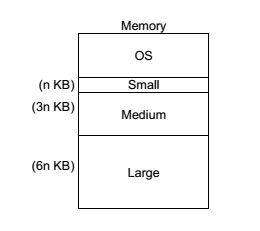

In this method, memory is divided into partitions whose sizes are fixed. The OS is placed in the lowest bytes of memory. Processes are classified on entry to the system according to their memory requirements. We need one Process Queue (PQ) for each class of process. When a process is selected for memory allocation, it is loaded into memory and competes for the processor.

The number of fixed partitions gives the degree of multi-programming. Since each queue has its own memory region, there is no competition between queues for the memory.
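The classification step can be sketched as follows. The partition sizes are hypothetical, and the rule "queue a process for the smallest partition that can hold it" is an assumption about how the classes are formed; the text only says that processes are classified by memory requirement.

```python
from collections import deque

# Hypothetical partition sizes in KB; the OS area is not modelled here.
PARTITION_SIZES_KB = [2, 6, 12]

# One process queue (PQ) per partition class.
queues = {size: deque() for size in PARTITION_SIZES_KB}


def classify(name: str, needed_kb: int) -> int:
    """Queue a process for the smallest partition that can hold it.

    Returns the chosen partition size; raises ValueError if nothing fits.
    """
    for size in sorted(PARTITION_SIZES_KB):
        if needed_kb <= size:
            queues[size].append((name, needed_kb))
            return size
    raise ValueError(f"{name} needs {needed_kb} KB, larger than any partition")


classify("P1", 1)
classify("P2", 5)
classify("P3", 6)
print({size: list(q) for size, q in queues.items()})
```

With three partitions, at most three processes are in memory at once, which is the degree of multi-programming in this sketch.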

Fixed Partitioning with Swapping

This is a version of fixed partitioning that uses round-robin scheduling (RRS) with some time quantum. When the time quantum for a process expires, it is swapped out of memory to disk and the next process in the corresponding process queue is swapped into memory.
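As a rough illustration, the behaviour of a single partition under this scheme can be simulated as below. The function name `run_partition`, the process names, and the time units are hypothetical, and the cost of the swaps themselves is ignored.

```python
from collections import deque


def run_partition(queue, quantum):
    """Simulate one partition served round-robin with swapping.

    queue   -- iterable of (name, remaining_time) pairs waiting for the partition
    quantum -- time quantum; units are arbitrary
    Returns the list of (name, time_run) slices in execution order.
    """
    waiting = deque(queue)
    trace = []
    while waiting:
        name, remaining = waiting.popleft()          # swap the process in
        ran = min(quantum, remaining)
        trace.append((name, ran))
        if remaining > ran:
            waiting.append((name, remaining - ran))  # quantum expired: swap out
    return trace


print(run_partition([("A", 5), ("B", 2), ("C", 4)], quantum=2))
```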

Normally, a process that is swapped out will eventually be swapped back into the same partition, but this restriction can be relaxed with dynamic relocation. In some cases, a running process may request more memory than its partition size. Say we have a 6 KB process running in a 6 KB partition and it now requires 1 KB more memory. Then the following policies are possible:

- Return control to the user program. Let the program decide whether to quit or to modify its operation so that it can run (possibly slowly) in less space.

- Abort the process. (The user states the maximum amount of memory that the process will need, so it is the user’s responsibility to stick to that limit.)

- If dynamic relocation is being used, swap the process out to the PQ of the next larger partition and load it into that partition when its turn comes.

The main problem with the fixed partitioning method is how to determine the number of partitions, and how to determine their sizes.

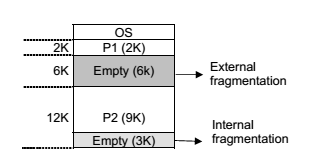

If a whole partition is currently not being used, the wasted space is called external fragmentation. If a partition is being used by a process that requires less memory than the partition size, the unused space inside the partition is called internal fragmentation.

With this memory layout, if a new process, P3, requiring 8 KB of memory arrives, it cannot be loaded because of fragmentation, although there is enough total free space in memory.
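The arithmetic behind this situation can be sketched with a small example. The layout below is hypothetical (it is not the one in the figure), and the helper names are chosen only for illustration.

```python
# Hypothetical layout: (partition size in KB, size of the resident process or None).
partitions = [(4, 3), (10, 5), (6, None)]


def internal_fragmentation(parts):
    """KB wasted inside occupied partitions."""
    return sum(size - used for size, used in parts if used is not None)


def unused_partitions(parts):
    """KB in partitions that hold no process at all."""
    return sum(size for size, used in parts if used is None)


def can_load(parts, needed_kb):
    """A process fits only in a single empty partition that is big enough."""
    return any(used is None and size >= needed_kb for size, used in parts)


wasted = internal_fragmentation(partitions) + unused_partitions(partitions)
print(wasted)                   # 12 KB unused in total
print(can_load(partitions, 8))  # False: the only empty partition is 6 KB
```

Here 12 KB is unused overall, yet an 8 KB process still has to wait, because the free space is spread over three separate partitions.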

2 Variable Partitioning

With fixed partitions we have to deal with the problem of determining the number and sizes of partitions to minimize internal and external fragmentation. If we use variable partitioning instead, then partition sizes may vary dynamically.

In the variable partitioning method, we keep a table (linked list) indicating the used and free areas in memory. Initially, the whole memory is free and is considered one large block. When a new process arrives, the OS searches for a block of free memory large enough for that process, allocates only as much of it as the process needs, and keeps the rest available (free) for future processes. When a block becomes free, the OS tries to merge it with its neighbors if they are also free. There are three algorithms for searching the list of free blocks for a specific amount of memory.

First Fit: Allocate the first free block that is large enough for the new process. This is a fast algorithm.

Best Fit: Allocate the smallest block among those that are large enough for the new process. In this method, the OS has to search the entire list, or it can keep the list sorted by size and stop at the first entry that is at least as large as the new process. This algorithm produces the smallest leftover block. However, it requires more time, either for searching the whole list or for keeping it sorted.

Worst Fit: Allocate the largest block among those that are large enough for the new process. Again, either a search of the entire list or a sorted list is needed. This algorithm produces the largest leftover block.
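The free-list bookkeeping and the three search strategies can be sketched together as follows. This is a minimal illustration, not a definitive implementation: it uses a Python list of `(start, size)` holes instead of a linked list, and the class name `FreeList` and its methods are hypothetical.

```python
class FreeList:
    """Free areas kept as a list of (start, size) holes sorted by start address."""

    def __init__(self, memory_size):
        self.holes = [(0, memory_size)]  # initially one large free block

    def _pick(self, size, strategy):
        fits = [h for h in self.holes if h[1] >= size]
        if not fits:
            return None
        if strategy == "first":
            return fits[0]                        # lowest address that fits
        if strategy == "best":
            return min(fits, key=lambda h: h[1])  # smallest block that fits
        if strategy == "worst":
            return max(fits, key=lambda h: h[1])  # largest block
        raise ValueError(f"unknown strategy: {strategy}")

    def allocate(self, size, strategy="first"):
        """Return the start address of the allocated area, or None if nothing fits."""
        hole = self._pick(size, strategy)
        if hole is None:
            return None
        start, hole_size = hole
        index = self.holes.index(hole)
        if hole_size == size:
            del self.holes[index]
        else:
            self.holes[index] = (start + size, hole_size - size)  # rest stays free
        return start

    def release(self, start, size):
        """Return an area to the free list and merge it with free neighbors."""
        self.holes.append((start, size))
        self.holes.sort()
        merged = [self.holes[0]]
        for s, sz in self.holes[1:]:
            last_start, last_size = merged[-1]
            if last_start + last_size == s:       # adjacent holes: merge them
                merged[-1] = (last_start, last_size + sz)
            else:
                merged.append((s, sz))
        self.holes = merged


mem = FreeList(32)            # hypothetical 32 KB of user memory
a = mem.allocate(8)
b = mem.allocate(4)
c = mem.allocate(10)
mem.release(a, 8)
mem.release(c, 10)            # merges with the free block that follows it
print(mem.holes)              # [(0, 8), (12, 20)]
```

Both best fit and worst fit scan every hole here, which reflects the extra search cost mentioned above; first fit could stop at the first match.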

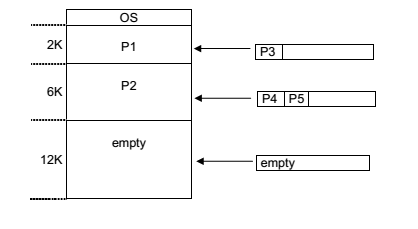

Example 1

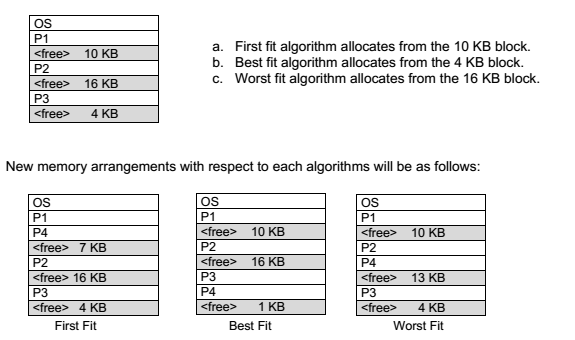

Consider the following memory map and assume a new process P4 comes with a memory requirement of 3 KB. Locate this process.

At this point, if a new process, P5, requiring 14 KB arrives, it would have to wait if we had used the worst fit algorithm, whereas it could be loaded if we had used either of the other two.
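The same effect can be reproduced with a small script. The free block sizes below are hypothetical, chosen only so that the outcome matches the one described; they are not taken from the figure.

```python
def place(holes, size, strategy):
    """Carve `size` KB out of one hole in place; return False if the process must wait."""
    fits = [i for i, h in enumerate(holes) if h >= size]
    if not fits:
        return False
    if strategy == "first":
        i = fits[0]
    elif strategy == "best":
        i = min(fits, key=lambda j: holes[j])
    else:  # "worst"
        i = max(fits, key=lambda j: holes[j])
    holes[i] -= size
    return True


for strategy in ("first", "best", "worst"):
    holes = [4, 16, 5]            # hypothetical free blocks in KB, in address order
    place(holes, 3, strategy)     # P4 needs 3 KB
    p5_loaded = place(holes, 14, strategy)
    print(strategy, holes, "P5 loaded" if p5_loaded else "P5 waits")
```

With these sizes, first fit and best fit both put P4 in the 4 KB block and leave the 16 KB block intact for P5, while worst fit takes P4's 3 KB from the 16 KB block and leaves only 13 KB there.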

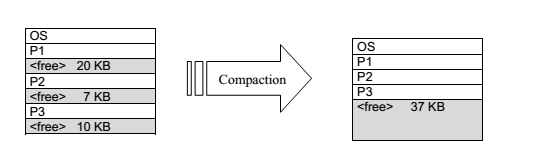

Compaction: Compaction is a method to overcome the external fragmentation problem. All free blocks are brought together as one large block of free space. Compaction requires dynamic relocation. Compaction has a cost, and selecting an optimal compaction strategy is difficult. One method of compaction is to swap out the processes that are to be moved within memory and swap them back in at different memory locations.
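One simple strategy, sliding every allocated block toward low memory, can be sketched as below. This is only one possible strategy and is not claimed to be optimal; the function name `compact`, the block layout, and the 32 KB size are hypothetical, and addresses are taken relative to the start of the user area.

```python
def compact(blocks, memory_size):
    """Slide every allocated block toward low memory.

    blocks -- list of (name, base, size) for allocated areas, in any order
    Returns (relocated blocks, the single free block as (start, size)).
    """
    relocated = []
    next_free = 0
    for name, base, size in sorted(blocks, key=lambda b: b[1]):
        relocated.append((name, next_free, size))  # new base after relocation
        next_free += size
    return relocated, (next_free, memory_size - next_free)


# Hypothetical 32 KB user area with three scattered processes.
blocks = [("P1", 2, 6), ("P2", 12, 4), ("P3", 22, 5)]
print(compact(blocks, 32))
```

Every process whose base address changes has to be relocated, which is why dynamic relocation is a prerequisite, and copying the blocks is where the cost of compaction comes from.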